A Deep Look at Retrieval, Trust Signals, and Why Visibility Still Starts at the Top

If you ask ChatGPT a question today, you get an answer.

If you ask Google, you might get an AI Overview.

If you ask Perplexity, you get citations stacked neatly under each paragraph.

But here’s the question most people never stop to ask:

How do these systems decide what to cite?

Why does Google AI Overview overwhelmingly reference pages that already rank in the top 10?

Why does Perplexity overlap with top-10 Google results about 91 percent of the time?

Why does ChatGPT only overlap about 14 percent of the time?

If AI search feels magical, it’s because most people never look behind the curtain.

Let’s pull it back.

The Myth: AI Search Replaced Google

There is a popular narrative that AI search engines have replaced traditional search engines.

That is not how it works.

AI systems did not eliminate search infrastructure.

They layered language generation on top of it.

Underneath every AI answer is a retrieval process.

Retrieval determines what documents get pulled into the system.

Only after retrieval does generation happen.

If retrieval is biased toward high-authority, high-ranked, well-indexed pages, then citations will reflect that bias.

Which is exactly what we are seeing.

Step 1: Retrieval Is the Real Gatekeeper

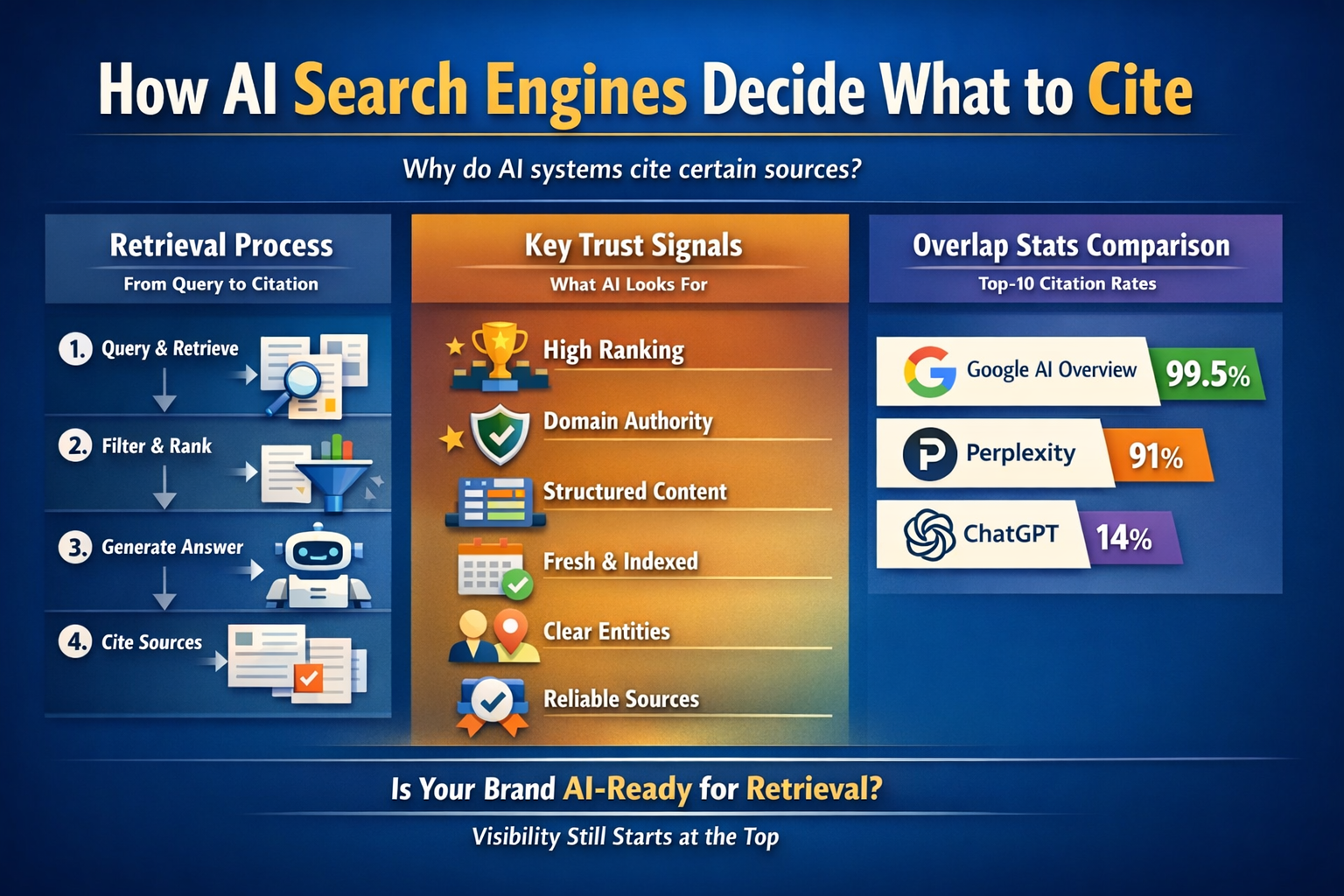

Every AI search engine follows some variation of this flow:

- User submits a query.

- The system performs retrieval.

- Retrieved documents are ranked or filtered.

- The LLM synthesizes an answer.

- Citations are attached to supporting sources.
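The flow above can be sketched as a toy pipeline. This is only an illustration under loud assumptions: the `Document` class, the word-overlap retrieval, the `score` field, and the example URLs are all hypothetical stand-ins, not how any real AI search engine is implemented.

```python
from dataclasses import dataclass


@dataclass
class Document:
    url: str
    text: str
    score: float  # hypothetical trust/authority score assigned upstream


def retrieve(query: str, index: list[Document]) -> list[Document]:
    """Toy retrieval: keep documents sharing at least one word with the query."""
    terms = set(query.lower().split())
    return [doc for doc in index if terms & set(doc.text.lower().split())]


def rank(docs: list[Document], top_k: int = 2) -> list[Document]:
    """Keep only the top_k highest-scoring retrieved documents."""
    return sorted(docs, key=lambda d: d.score, reverse=True)[:top_k]


def synthesize(docs: list[Document]) -> str:
    """Stand-in for the LLM: it can only restate what retrieval handed over."""
    return " ".join(f"{doc.text} [{i}]" for i, doc in enumerate(docs, start=1))


def answer(query: str, index: list[Document]) -> tuple[str, list[str]]:
    """Run retrieval, ranking, and synthesis, then return text plus citations."""
    docs = rank(retrieve(query, index))
    return synthesize(docs), [doc.url for doc in docs]


index = [
    Document("https://example.com/a", "Solar panels convert sunlight into electricity.", 0.9),
    Document("https://example.com/b", "Solar output depends on panel angle.", 0.7),
    Document("https://example.com/c", "Solar myths debunked.", 0.2),
    Document("https://example.com/d", "Best pizza recipes.", 0.95),
]

text, citations = answer("how do solar panels work", index)
print(citations)
```

Page `d` has the highest score but is never retrieved, so it cannot be cited. Page `c` is retrieved but filtered out at the ranking step. Generation only ever sees `a` and `b`.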

The critical step is retrieval.

The language model does not browse the internet in real time the way a person does.

It queries a retrieval layer.

That retrieval layer might rely on:

- A search index

- A proprietary crawl

- A partner search API

- A hybrid knowledge graph

- Vector similarity search

- Cached snapshots

- Structured entity databases

Retrieval narrows the universe.

Generation simply explains what retrieval provided.

This is why most AI answers feel aligned with what is already visible on the internet.

Because they are.

Why 99.5 Percent of Google AI Overview Sources Come from Top 10 Results

This number surprises people. It should not.

Google AI Overview is not an independent brain floating above search results.

It is built on Google’s ranking system.

If a page is already in the top 10, it typically has:

- Strong link signals

- Topical authority

- Structured content

- Crawl stability

- High trust metrics

- Fresh indexing

- Proven relevance

Why would Google’s AI pull from page 27 instead?

It rarely does.

AI Overview appears to draw overwhelmingly from pages that Google’s ranking system already trusts.

This tells us something important.

AI answers are not bypassing SEO.

They are compressing it.

Instead of 10 blue links, you now get a synthesized paragraph drawn from those same 10 blue links.

The pipeline changed.

The trust model did not.

Why Perplexity Overlaps with Top 10 Results 91 Percent of the Time

Perplexity presents itself as an AI-first search engine.

But its citation behavior reveals something fascinating.

When you analyze citation overlap, about 91 percent of Perplexity’s cited sources come from Google’s top 10 results for the same query.

That suggests Perplexity’s retrieval layer heavily overlaps with conventional search rankings.
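To make the overlap figure concrete, here is one way such a metric could be computed. This is a sketch, not the methodology behind the 91 percent number, which this article does not specify. The function names, the URL normalization rule, and the sample URLs are assumptions for illustration.

```python
from urllib.parse import urlparse


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal."""
    parsed = urlparse(url)
    host = parsed.netloc.lower().removeprefix("www.")
    return host + parsed.path.rstrip("/")


def top10_overlap(cited: list[str], top10: list[str]) -> float:
    """Share of cited URLs that also appear in the top-10 list for the same query."""
    if not cited:
        return 0.0
    ranked = {normalize(u) for u in top10}
    hits = sum(1 for u in cited if normalize(u) in ranked)
    return hits / len(cited)


# Hypothetical data for a single query
cited = [
    "https://www.example.com/guide/",
    "https://example.org/post",
    "https://other.example.net/x",
]
top10 = [
    "https://example.com/guide",
    "https://example.org/post",
    "https://example.edu/paper",
]

overlap = top10_overlap(cited, top10)
print(f"{overlap:.0%} of citations are in the top 10")
```

Averaging this value over many queries would give a platform-level overlap percentage. Whether to match on exact URL, path, or domain is a design choice that changes the result.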

Why?

Because top 10 results are:

- Already optimized for clarity

- Already structured for crawlability

- Already authority-weighted

- Already contextually aligned with the query

Perplexity’s model likely uses a hybrid approach:

- Traditional search index

- Vector similarity scoring

- Real-time ranking signals

- Domain trust heuristics

So even though it feels independent, it is structurally anchored to high-ranking content.

In practical terms, if your page is not already visible in traditional search, Perplexity is statistically unlikely to surface it.

That should reshape how we think about AI search optimization.

Why ChatGPT Only Overlaps 14 Percent of the Time

This is where things get interesting.

ChatGPT’s citation overlap with Google’s top 10 is much lower.

Around 14 percent.

Why?

Because ChatGPT’s retrieval architecture is different.

ChatGPT appears to operate in a hybrid model:

- Pre-trained knowledge

- Partnered browsing layer

- Selective search integrations

- Cached snapshots

- Retrieval-augmented generation systems

It does not depend as heavily on Google’s top-10 list.

Sometimes it draws from:

- Authoritative domains not currently ranking high

- Aggregated datasets

- Knowledge graph entries

- Internal training patterns

- Structured databases

That explains the lower overlap.

But lower overlap does not mean random selection.

It still appears to prefer:

- High domain authority

- Clear structured pages

- Well-organized content

- Trusted sources

- Consistent entity signals

The trust layer remains intact.

It is simply broader.

The Trust Signals AI Systems Look For

AI retrieval systems rely on signals.

Not feelings. Not creativity. Signals.

These signals include:

1. Ranking Authority

If a search engine already ranks a page highly, that is a powerful proxy for trust.

This explains Google AI Overview behavior.

2. Domain Authority

Well-known domains get preferential treatment.

Why?

Because they are stable, frequently crawled, and rarely spammy.

3. Content Structure

AI systems prefer:

- Clear headings

- Defined sections

- FAQ blocks

- Lists

- Tables

- Concise paragraphs

- Declarative statements

Unstructured walls of text are harder to retrieve cleanly.

4. Entity Clarity

Does the page clearly define:

- Who

- What

- Where

- How

- Why

Does it unambiguously describe the brand, service, or concept?

Entity confusion reduces citation likelihood.

5. Freshness and Index Stability

Recently indexed, consistently crawled pages are easier to trust.

If Google cannot crawl a page reliably, AI systems will struggle too.

6. Citation Behavior Patterns

AI systems may reinforce patterns:

If certain domains are frequently cited in similar queries, they become default references.

Trust compounds.
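One way to observe this pattern is to log which URLs an AI system cites across a set of related queries and count the domains. The sketch below assumes you have already collected such a log by hand or with your own tooling. The `citation_log` data and the `domain_counts` helper are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_counts(citation_log: list[list[str]]) -> Counter:
    """Count how many answers cited each domain (at most once per answer)."""
    counts = Counter()
    for cited_urls in citation_log:
        domains = {urlparse(u).netloc.lower() for u in cited_urls}
        counts.update(domains)
    return counts


# Hypothetical log: one inner list of cited URLs per answered query
citation_log = [
    ["https://docs.example.com/a", "https://news.example.org/b"],
    ["https://docs.example.com/c", "https://docs.example.com/d"],
    ["https://docs.example.com/e", "https://blog.example.net/f"],
]

counts = domain_counts(citation_log)
print(counts.most_common(1))
```

A domain that appears in nearly every answer for a topic is acting as a default reference for that topic.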

Retrieval Mechanics in Simple Terms

Let’s simplify how retrieval might work.

A query is transformed into an embedding.

An embedding is a numerical representation of meaning.

The system searches for documents whose embeddings are closest to that query.
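A common way to measure "closest" is cosine similarity between vectors. The sketch below uses made-up three-dimensional vectors purely for illustration. Real embeddings come from a trained model and have far more dimensions, and the document names here are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query_vec: list[float], doc_vecs: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the names of the k documents most similar to the query."""
    ranked = sorted(
        doc_vecs,
        key=lambda name: cosine_similarity(query_vec, doc_vecs[name]),
        reverse=True,
    )
    return ranked[:k]


# Made-up vectors standing in for real embeddings
doc_vecs = {
    "solar-guide": [0.9, 0.1, 0.0],
    "panel-angles": [0.7, 0.3, 0.1],
    "pizza-recipes": [0.0, 0.2, 0.9],
}
query_vec = [1.0, 0.0, 0.0]

closest = nearest(query_vec, doc_vecs)
print(closest)
```

Production systems typically use an approximate nearest-neighbor index instead of sorting every document, but the idea is the same: rank by vector similarity, keep the top few.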

It then filters those documents by:

- Relevance score

- Authority score

- Freshness

- Domain trust

- Spam filters

- Query intent match
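The filtering step could look something like the sketch below. The `Candidate` fields, the weights, and the sample values are all assumptions chosen for illustration. Real systems do not publish their scoring formulas.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float  # similarity to the query, 0..1
    authority: float  # hypothetical domain/page authority, 0..1
    freshness: float  # 1.0 = just updated, 0.0 = stale
    is_spam: bool


# Hypothetical weights, not taken from any real system
WEIGHTS = {"relevance": 0.5, "authority": 0.3, "freshness": 0.2}


def score(c: Candidate) -> float:
    """Weighted blend of the signals for one candidate."""
    return (
        WEIGHTS["relevance"] * c.relevance
        + WEIGHTS["authority"] * c.authority
        + WEIGHTS["freshness"] * c.freshness
    )


def select(cands: list[Candidate], k: int = 2) -> list[Candidate]:
    """Drop spam, then keep the k best-scoring candidates."""
    clean = [c for c in cands if not c.is_spam]
    return sorted(clean, key=score, reverse=True)[:k]


cands = [
    Candidate("https://example.com/a", 0.90, 0.8, 0.5, False),
    Candidate("https://example.com/b", 0.95, 0.2, 0.3, False),
    Candidate("https://example.com/c", 0.70, 0.9, 0.9, False),
    Candidate("https://example.com/d", 0.99, 0.9, 0.9, True),
]

selected = [c.url for c in select(cands)]
print(selected)
```

In this toy data, page `b` is the most relevant non-spam page, yet it loses to `c` and `a` because its authority and freshness are weak. Relevance alone does not get a page into the pool.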

The top documents are passed to the LLM.

The LLM synthesizes.

Citations are attached to sentences derived from those documents.

Notice something.

At no point does the model randomly browse.

It selects from a constrained pool.

Which means visibility still begins before the AI layer.

Why Most Businesses Will Not Be Cited

Here is the uncomfortable truth.

Most business websites are:

- Poorly structured

- Thin on clear entity definitions

- Lacking schema markup

- Missing FAQ clusters

- Missing canonical definitions

- Poorly interlinked

- Inconsistent in messaging

- Weak in authority signals

Even if they are good businesses.

AI systems do not evaluate your service quality directly.

They evaluate digital clarity.

If your digital representation is messy, retrieval probability drops.

Which means generation never sees you.

Which means citation never happens.

The Compounding Effect of Top-10 Bias

When 99.5 percent of Google AI Overview sources come from top-10 pages, something interesting happens.

The top pages become even more dominant.

Because:

- AI references them.

- Users see them.

- They gain more traffic.

- They gain more links.

- They strengthen authority.

- They remain top ranked.

- AI continues citing them.

This is feedback loop amplification.
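A toy simulation makes the loop visible. It assumes, purely for illustration, that each round the top-ranked pages get cited and every cited page gains a fixed 5 percent in authority. The page names, starting scores, and boost are hypothetical.

```python
def simulate(
    authority: dict[str, float],
    rounds: int,
    top_k: int = 2,
    boost: float = 0.05,
) -> dict[str, float]:
    """Each round, the top_k pages are cited and gain a fixed authority boost."""
    scores = dict(authority)
    for _ in range(rounds):
        cited = sorted(scores, key=scores.get, reverse=True)[:top_k]
        for page in cited:
            scores[page] *= 1 + boost
    return scores


start = {"page-a": 1.0, "page-b": 0.9, "page-c": 0.85}
result = simulate(start, rounds=10)

gap_before = start["page-b"] - start["page-c"]
gap_after = result["page-b"] - result["page-c"]
print(f"gap between second and third place: {gap_before:.2f} -> {gap_after:.2f}")
```

The third page starts only slightly behind, never enters the cited set, and never gains anything. The gap widens every round even though nothing about the content changed.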

AI search does not flatten the field.

It often reinforces existing authority hierarchies.

Which means new brands need strategic positioning to enter the retrieval pool.

The Misconception About “Being Optimized for AI”

Many founders now ask:

“How do I optimize for AI search?”

The wrong answer is:

“Write for ChatGPT.”

The correct answer is:

“Optimize for retrieval.”

Which means:

- Clear entity modeling

- Structured schema

- Strong authority signals

- Topical clustering

- Internal linking

- Consistent canonical representation

- High crawl stability
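As one concrete example of entity modeling with structured schema, a brand can describe itself with schema.org `Organization` markup in JSON-LD. The sketch below builds that markup in Python. The company name, URLs, and description are placeholders, not real entities.

```python
import json

# Placeholder entity details; replace with your own brand's canonical values
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Solar Co.",
    "url": "https://example.com",
    "description": "Residential solar panel installation in Austin, Texas.",
    "sameAs": [
        "https://www.linkedin.com/company/example-solar",
        "https://www.facebook.com/examplesolar",
    ],
}

markup = json.dumps(organization, indent=2)
print(markup)
```

The output would go inside a `<script type="application/ld+json">` tag on the site. Using the same name and description everywhere the brand appears is what keeps the canonical representation consistent.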

AI systems reward clarity.

They reward structure.

They reward authority signals.

They reward definitional strength.

They do not reward clever prompts on your own site.

The Visibility Angle Emerging

If AI retrieval leans heavily on:

- Top-10 ranked pages

- Structured content

- Strong authority domains

- Clear entity definitions

Then businesses must ask:

Is our brand structured in a way that retrieval engines can easily understand?

Not:

Are we creative?

Not:

Are we writing long blog posts?

But:

Are we clearly defined?

Is our business entity coherent?

Are our services explicitly structured?

Do we present FAQs in clean machine-readable formats?

Is our knowledge centralized and consistent?

If not, retrieval probability decreases.
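For the FAQ question specifically, one widely used machine-readable format is schema.org `FAQPage` markup in JSON-LD. Here is a minimal sketch that generates it from question and answer pairs. The `faq_jsonld` helper and the sample questions are hypothetical.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


faqs = [
    ("Do you offer weekend installation?", "Yes, on Saturdays by appointment."),
    ("How long does installation take?", "Most homes take one to two days."),
]

faq_markup = faq_jsonld(faqs)
print(faq_markup)
```

The markup should mirror FAQ text that is also visible on the page. Markup alone does not guarantee retrieval or citation.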

This is where the second phase of AI search optimization emerges.

Not just ranking.

But structured knowledge clarity.

Why Overlap Percentages Matter

Let’s revisit those numbers:

Google AI Overview → 99.5 percent from top 10

Perplexity → 91 percent overlap with top 10

ChatGPT → 14 percent overlap with top 10

What do these numbers teach us?

- AI systems are not independent from traditional ranking systems.

- Authority still matters.

- Structure still matters.

- Visibility is still earned.

- Retrieval diversity varies by platform.

This means optimization cannot be only platform-specific.

It must be:

Entity-specific.

Structure-specific.

Authority-aligned.

What Businesses Should Focus on Now

If I were advising a brand preparing for AI search visibility, I would prioritize:

- Structured service pages.

- Defined FAQ sections.

- Clear comparison content.

- Strong internal linking.

- Consistent brand entity descriptions.

- Schema implementation.

- Crawl stability.

- Canonical clarity.

Not hype.

Not trend chasing.

Not “AI keywords.”

Clarity.

The Strategic Opportunity

AI search is not eliminating SEO.

It is compressing it into a citation layer.

Which creates a new opportunity.

Brands that:

- Standardize their knowledge representation

- Centralize their entity definitions

- Ensure consistent structured output

- Maintain AI-friendly documentation

- Monitor citation patterns

Will outperform those who treat AI search as a novelty.

The next phase of search visibility is not about ranking higher.

It is about being retrievable.

Being structured.

Being trusted.

Being clear.

Final Thought

AI search engines do not randomly decide what to cite.

They retrieve.

They filter.

They synthesize.

They cite from what retrieval provides.

If retrieval favors authority, structure, and clarity, then that is where your effort must go.

The conversation should no longer be:

Which AI tool should I use?

It should be:

Is my brand structured in a way that AI systems can reliably retrieve and trust?

That is the real shift happening right now.

And most businesses have not realized it yet.